Security Assessments

Living baseline, not annual deliverable

What it is

An attacker-eye baseline of your institution's exposure — district by district across the campus city — with manual depth on the systems that actually matter (Workday, SIS, research enclaves, advancement, clinical), and output focused on prioritized remediation you'll actually do this quarter rather than a 400-page findings PDF. Anchored in ACRE so the baseline keeps refreshing instead of going stale the day the report is delivered.

Higher-Education Security Assessment: Calibrated for the Campus City

Generic attack-surface assessments don't understand higher education. They flag every research subdomain as an “exposed asset” without recognizing that decentralized DNS is the design, not the defect. Our higher-education security assessment approach calibrates against the campus reality: federated identity through InCommon, research-data classifications that span IRB-protected and CUI domains, and decentralized IT that is a feature of how universities operate.

The distinction we draw

A security assessment isn't a one-time deliverable. The classic engagement — annual pen test, hand over the PDF, repeat next year — gives you a 12-month-stale picture and a remediation backlog nobody owns. We treat assessment as a living attacker-eye baseline that re-runs against your perimeter every quarter, with periodic manual depth on the systems that warrant it. The output is a prioritized, owned, dated remediation queue — not findings dropped over the wall.

Why now — what attackers are actually doing

Recent and active incident patterns we calibrate manual-depth attention against: SaaS-platform compromises rippling through customer institutions (for example, the Canvas outage of May 2026 and its ripple effects through learning-platform recovery); MFA fatigue at scale against student populations; DNS and subdomain takeover of forgotten research subdomains; supply-chain compromise via low-attention SaaS vendors that satisfy HECVAT on paper; AI-assisted phishing tuned to faculty research domains. We recalibrate every quarter based on what's actually hitting peer institutions.

The pragmatic pieces we deliver

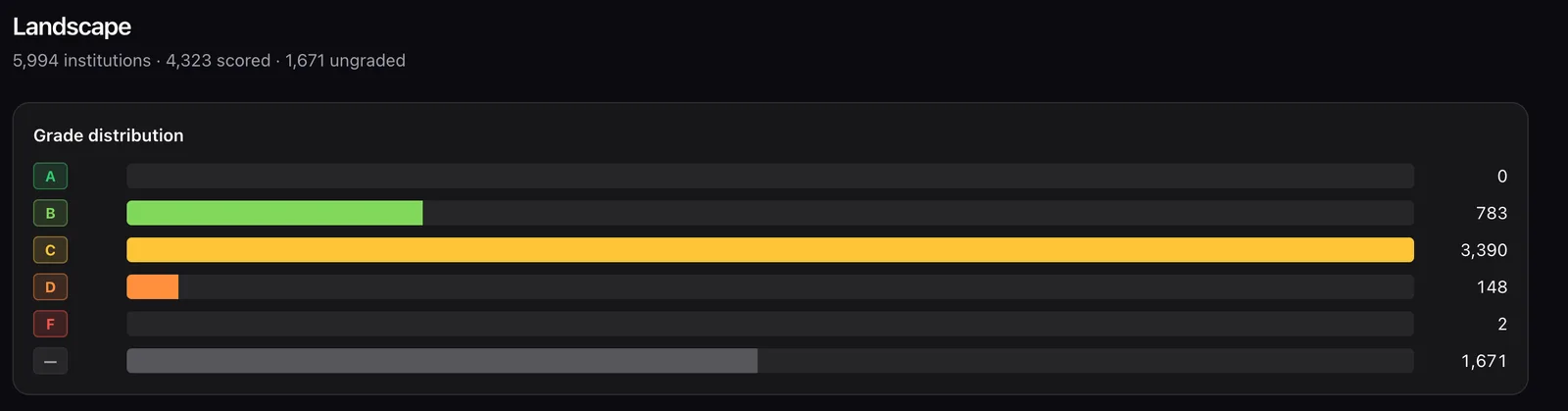

- ACRE A–F report card — per district plus institution-wide, refreshed quarterly, executive-ready.

- Manual-depth findings on the systems that warrant it — Workday, SIS, research enclaves, advancement, clinical, identity provider, M365 / Workspace tenant.

- Prioritized remediation queue — top 10 things to fix this quarter, with effort estimates and owners. Not a findings dump.

- Peer benchmark — anonymized comparison to peers on observable signals.

- Cyber-insurance-ready findings package — formatted for what carriers actually ask for at renewal.

- Pre-audit dry-run — what an external auditor or attacker will find before they look.

- Continuous baseline subscription — quarterly ACRE refresh plus ad-hoc reassessment when you stand up new systems.
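
As an illustration of the prioritized remediation queue described above, the following is a minimal Python sketch of how findings might be ranked into a short, owned list. It is not ACRE's scoring method, which this page does not describe. The `Finding` fields, the 1 to 5 severity scale, the half-day effort floor, and the "severity per day of effort" ranking are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    # All fields are hypothetical; a real engagement would define its own schema.
    title: str
    district: str
    severity: int        # assumed scale: 1 (low) to 5 (critical)
    effort_days: float   # estimated remediation effort
    owner: str


def remediation_queue(findings, limit=10):
    """Return the top `limit` findings, ranked by severity per day of effort."""
    def sort_key(f):
        # Floor effort at half a day so near-zero estimates do not dominate.
        value = f.severity / max(f.effort_days, 0.5)
        # Negate for descending order; severity and title break ties.
        return (-value, -f.severity, f.title)

    return sorted(findings, key=sort_key)[:limit]


findings = [
    Finding("Legacy auth enabled on M365 tenant", "identity", 5, 2.0, "Identity team"),
    Finding("Outdated TLS config on advancement portal", "advancement", 2, 1.0, "Advancement IT"),
    Finding("Dangling CNAME on retired lab subdomain", "research", 4, 0.5, "Research IT"),
]

queue = remediation_queue(findings, limit=2)
for rank, f in enumerate(queue, start=1):
    print(f"{rank}. [{f.district}] {f.title} (owner: {f.owner}, ~{f.effort_days}d)")
```

With these sample values, the half-day CNAME cleanup ranks first and the legacy-auth fix second. Weighting by effort is one possible design choice; an institution could just as reasonably rank on severity alone or add a weight for data classification.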

Where the products fit

ACRE is the engine. The institution gets a refreshed attacker-eye view per district every quarter without re-paying for a new engagement. The first engagement establishes the baseline; subsequent quarters run at subscription pace. Manual depth is our practitioner layer on top, covering the places where automation can't see and where attacker reasoning matters.

What “frictionless” means here

The institution knows its real exposure on demand, not annually. Remediation is sequenced so engineering teams know what to pick up Monday morning. New systems get assessed as they're deployed, not after they're breached. The Board question “are we secure?” gets a one-page answer with a current grade. Cyber-insurance renewal is data-driven, not a scramble. When we surface an issue, we stay on it until it's resolved.

Frameworks and references we use

NIST SP 800-30 (risk assessment methodology), NIST SP 800-115 (technical assessment), MITRE ATT&CK threat modeling, CIS Controls v8 assessment criteria. Higher-ed specific: research-enclave assessments (NIST SP 800-171 plus research-security supplemental controls), assessment patterns for decentralized IT, REN-ISAC threat intelligence, EDUCAUSE community benchmarks.

Engagement shape

4-week express baseline (ACRE-driven, executive-level output) → 10-week deep assessment with manual validation per district → quarterly re-baseline subscription. Research-enclave-specific assessment is a separate 3–6 week engagement with its own scope. Cyber-insurance-renewal support is typically 2–3 weeks.